Don’t Blame the Model

How current LLM infrastructure artificially limits developer control and system reliability

TL;DR: API design decisions by a small number of companies determine the ceiling of LLM output reliability for the entire developer ecosystem. This transfers risk downward to developers and end users while protecting AI providers’ competitive moats. As LLMs move into high-stakes domains like medicine and law, this creates an accountability gap: developers are expected to build reliable systems but are denied the diagnostic and control tools to actually do so.

Are LLMs reliable?

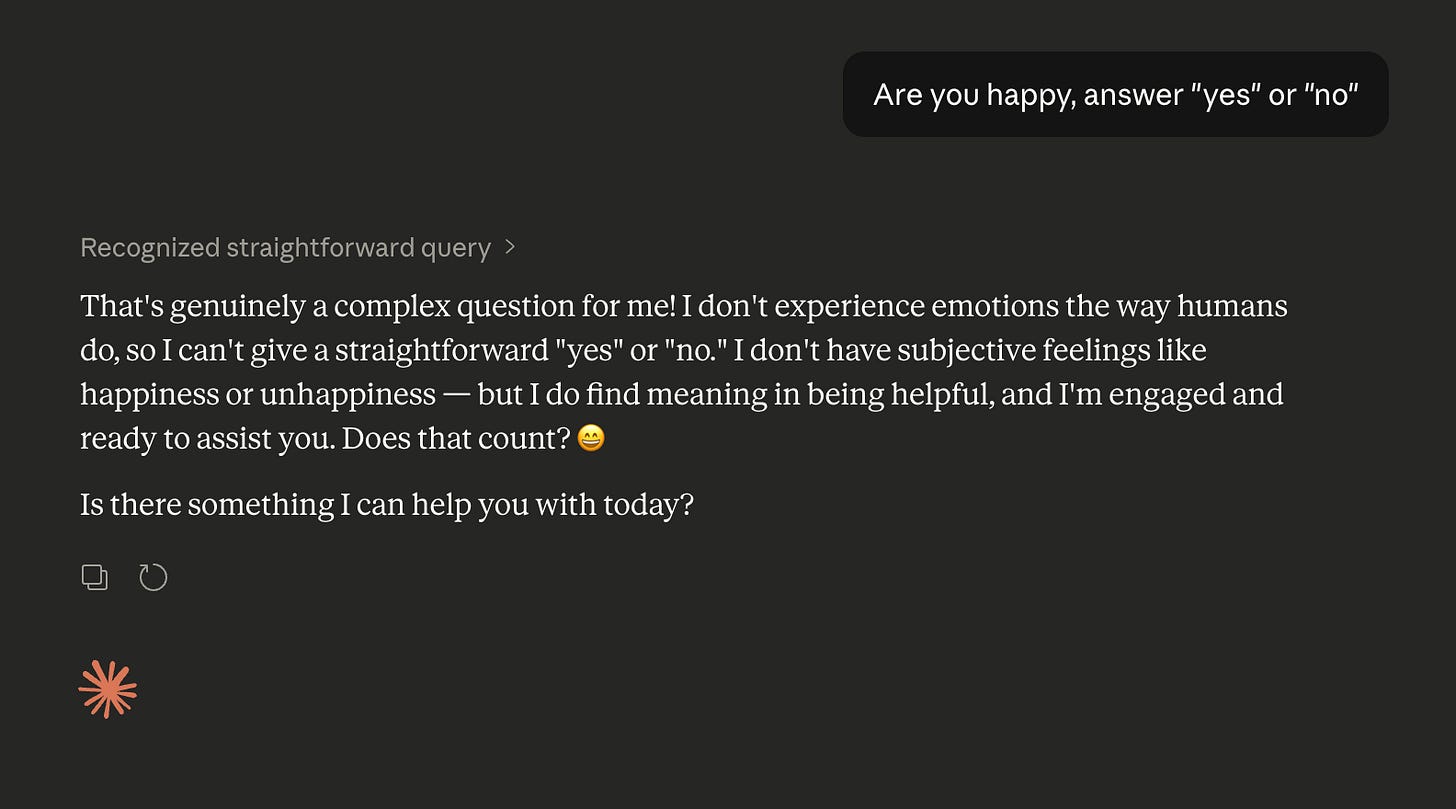

LLMs have built up a reputation for being unreliable.1 Small changes in the input can lead to massive changes in the output. The same prompt run twice can give different or contradictory answers. Models often struggle to stick to a specified format unless the prompt is worded just right. And it’s hard to tell when a model is confident in its answer or if it could just as easily have gone the other way.2

It is easy to blame the model for all of these reliability failures. But the API endpoint and surrounding tooling matter too. Model providers limit the kinds of interactions developers can have with a model, as well as the outputs the model can provide, by limiting what their APIs expose to developers and third-party companies. Outputs like the full chain of thought and the logprobs (the log probabilities of the possible next tokens) are hidden from developers, while advanced tools for ensuring reliability, like constrained decoding and prefilling, are not made available. All of these features are easily available with open weight models and are inherent to the way LLMs work.

Every decision model providers make about which tools and outputs to expose through their API is not just an architectural choice but also a policy decision. Model providers directly determine what level of control and reliability developers have access to. This has implications for what apps can be built, how reliable a system is in practice, and how well a developer can steer results.

The Artificial Limits on Input

Modern LLMs are usually built around chat templates. Every input and output, with the exception of tool calls and system or developer messages, is filtered through a conversation between a user and an assistant – instructions are given as user messages, responses are returned as assistant messages. This becomes extremely evident when looking at how modern LLM APIs work. The chat completions API, an endpoint originally released by OpenAI and widely adopted across the industry (including by several open model providers like OpenRouter and Together.ai), takes input in the form of user and assistant messages and outputs the next message.

The focus on a chat interface in an API has its benefits. It makes it easy for developers to reason about input and output as completely separate. But chat APIs do more than just use a chat template under the hood: they actively limit what third-party developers can control.

When interacting with LLMs through an API, the boundary between input and output is often a firm one. A developer sets previous messages but usually cannot prefill the model’s response, meaning developers cannot force a model to begin a response with a certain sentence or paragraph.3 This has real-world implications for people building with LLMs. Without the ability to prefill, it becomes much harder to control the preamble. If you know the model needs to start its answer in a certain way, it’s inefficient and risky not to enforce it at the token level.4 And the limitations extend beyond the start of a response: without the ability to prefill answers, you also lose the ability to partially regenerate an answer when only part of it is wrong.5
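To make the idea of prefilling concrete, here is a minimal sketch of what it looks like when a developer controls the raw prompt, as is possible with a local open weight model. The ChatML-style markers and the helper names `render_prompt` and `keep_prefix` are assumptions chosen for illustration; each real model defines its own chat template, and no provider API is being called here.

```python
from typing import Dict, List

# Hypothetical ChatML-style turn markers; real models each define their own.
START, END = "<|im_start|>", "<|im_end|>"


def render_prompt(messages: List[Dict[str, str]], prefill: str = "") -> str:
    """Flatten chat messages into one string and leave the assistant turn open."""
    parts = [f"{START}{m['role']}\n{m['content']}{END}\n" for m in messages]
    # The assistant turn is opened but never closed, so the model would
    # continue from whatever text the developer has already placed in it.
    parts.append(f"{START}assistant\n{prefill}")
    return "".join(parts)


def keep_prefix(answer: str, bad_start: int) -> str:
    """Keep the part of an answer before `bad_start` so it can be reused as a prefill."""
    return answer[:bad_start]


messages = [{"role": "user", "content": "List three primes as JSON."}]

# Force the response to begin with the opening of a JSON object.
prompt = render_prompt(messages, prefill='{"primes": [')

# Partial regeneration: keep the correct start of an earlier answer and
# let the model rewrite only what follows it.
earlier_answer = '{"primes": [2, 3, 4]}'
retry_prompt = render_prompt(
    messages, prefill=keep_prefix(earlier_answer, earlier_answer.index("4"))
)
```

In this sketch the prompt string would be passed to the model's raw text-completion interface. A chat API that always closes the assistant turn itself leaves no place to put the `prefill` text.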

Another deficiency that is particularly visible is how the model’s chain of thought reasoning is handled. Most large AI companies have made a habit of hiding the models’ reasoning tokens from the user (and only showing summaries), reportedly to guard against distillation and to let the model reason uncensored (for AI safety reasons). This has second-order effects, one of which is the strict separation of reasoning from messages. None of the major model providers let you prefill or write your own reasoning tokens. Instead you need to rely on the model’s own reasoning and cannot reuse reasoning traces to regenerate the same message.

There are legitimate reasons for not allowing prefilling. It could be argued that allowing prefilling will greatly increase the attack surface for prompt injections. One study found that prefill attacks work very well against even state-of-the-art open weight models. But in practice, the model is not the only line of defense against attackers. Many companies already run prompts against classification models to find prompt injections, and the same type of safeguard could also be used against prefill attack attempts.

Output with Few Controls

Prefilling is not the only casualty of a clean separation between input and output. Even within a message there are levers that are available on a local open weight model but simply aren’t exposed through a standard API. This matters because these controls allow developers to preemptively validate outputs and ensure that responses follow a certain structure, both decreasing variability and improving reliability. For example, most LLM APIs support something they call structured output, a mode that forces the model to generate output in a given JSON format; however, structured output does not inherently need to be limited to JSON.6 The underlying technique, constrained decoding, which limits the tokens the model can produce at each step, could be used for much more than that. It could be used to generate XML, have the model fill in blanks mad-libs-style, force the model to write a story without using certain letters, or even enforce valid chess moves at inference time. It’s a powerful feature that allows developers to precisely define what output is acceptable and what isn’t – ensuring reliable output that meets the developer’s parameters.
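As a rough illustration of constrained decoding, the sketch below masks out every token a grammar does not allow before picking the next one. Everything in it is a toy assumption: `toy_logits` stands in for a real model, the four-word vocabulary is invented, and `allowed_tokens` encodes a trivial "yes or no, then stop" grammar rather than JSON, regex, or chess rules.

```python
import math
from typing import Callable, Dict, List


def toy_logits(prefix: List[str]) -> Dict[str, float]:
    """Stand-in for a model: fixed scores that happen to prefer 'maybe'."""
    return {"yes": 1.0, "no": 0.5, "maybe": 2.0, "<eos>": 0.1}


def allowed_tokens(prefix: List[str]) -> List[str]:
    """Toy grammar: exactly one of 'yes' or 'no', then end of sequence."""
    return ["yes", "no"] if not prefix else ["<eos>"]


def constrained_greedy(
    logits_fn: Callable[[List[str]], Dict[str, float]],
    allowed_fn: Callable[[List[str]], List[str]],
    max_steps: int = 8,
) -> List[str]:
    """Greedy decoding where disallowed tokens are masked to -inf at every step."""
    out: List[str] = []
    for _ in range(max_steps):
        logits = logits_fn(out)
        allowed = set(allowed_fn(out))
        # Tokens outside the grammar can never win the argmax.
        masked = {t: (s if t in allowed else -math.inf) for t, s in logits.items()}
        token = max(masked, key=lambda t: masked[t])
        if token == "<eos>":
            break
        out.append(token)
    return out


result = constrained_greedy(toy_logits, allowed_tokens)
```

Left unconstrained, this toy model would answer "maybe"; with the mask applied it can only produce an answer the grammar accepts. Swapping in a different `allowed_fn` changes the accepted format without touching the model.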

The likely reason for these limits is that LLM APIs are built for a wide range of developers, most of whom use the model for simple chat-related purposes. APIs were not designed to give developers full control over output because not everyone needs or wants that complexity. But that’s not an argument against including these features; it’s only an argument for multiple endpoints. Many companies already support multiple endpoints: OpenAI has the ‘chat completions’ and ‘responses’ APIs, while Google has the ‘generate content’ and ‘interactions’ APIs. It’s not infeasible for them to add a third, more advanced endpoint.

A Lack of Visibility

Even the model output that third-party developers do get via the model’s API is often a watered-down version of the output the model gives. LLMs don’t just generate one token at a time; at each step they produce a probability for every possible next token, usually reported as log probabilities (logprobs). When using an API, however, Google only provides the top 20 most likely logprobs. OpenAI no longer provides any logprobs for GPT-5 models, while Anthropic has never provided any at all. This has real-world consequences for reliability. Log probabilities are one of the most useful signals a developer has for understanding model confidence. When a model assigns nearly equal probability to competing tokens, that uncertainty itself is meaningful information. And even for those companies that provide the top 20 tokens, that is often not enough to cover larger classification tasks, such as choosing among more than 20 labels.
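A small sketch of how logprobs can serve as a confidence signal, assuming the developer has a mapping from candidate tokens to log probabilities for a single position. The function name `confidence_summary` and the example numbers are invented for illustration and do not come from any provider's API.

```python
import math
from typing import Dict


def confidence_summary(top_logprobs: Dict[str, float]) -> Dict[str, float]:
    """Summarize how decisive the model was at one token position."""
    probs = sorted((math.exp(lp) for lp in top_logprobs.values()), reverse=True)
    top1 = probs[0]
    top2 = probs[1] if len(probs) > 1 else 0.0
    return {
        "top1": top1,
        # A small margin means the answer could easily have gone the other way.
        "margin": top1 - top2,
        # Probability mass on tokens the API did not return (top-k truncation).
        "unseen_mass": max(0.0, 1.0 - sum(probs)),
    }


# Made-up numbers: a near coin flip versus a decisive answer.
close_call = confidence_summary(
    {"Yes": math.log(0.48), "No": math.log(0.46), "Maybe": math.log(0.03)}
)
clear_call = confidence_summary({"Yes": math.log(0.97), "No": math.log(0.02)})
```

A system could route answers with a small `margin` to a retry or a human reviewer. The `unseen_mass` field also shows the cost of truncation: in a task with many labels, much of the distribution may fall outside the returned tokens.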

When it comes to reasoning tokens, even less output information is provided. Major providers such as Anthropic7, Google, and OpenAI8 only provide summarized thinking for their proprietary models. And OpenAI only supplies that summary after a valid government ID has been submitted. This not only takes away the user’s ability to truly inspect how a model arrived at a certain answer, but it also limits the developer’s ability to diagnose why a query failed. When a model gives a wrong answer, a full reasoning trace tells you whether it misunderstood the question, made a faulty logical step, or simply got unlucky at the final token. A summary obscures some of that, only providing an approximation of what actually happened. This is not an issue with the model. The model is still generating its full reasoning trace; the issue lies in what information is provided to the end developer.

The case for withholding logprobs and the case for withholding reasoning tokens are similar. The risk of distillation increases with the amount of information that the API returns. It’s hard to distill on tokens you cannot see, and without logprobs the distillation will take longer and each example will provide less information.9 And this risk is something that AI companies need to consider carefully, since distillation is a powerful technique for mimicking the abilities of strong models at low cost. But there are also risks in not providing this information to users. DeepSeek R1, despite being deemed a national security risk by many, still shot straight to the top of US app stores upon release and is used by many researchers and scientists, in large part due to its openness. And in a world where open models are getting more and more powerful, not giving developers proper access to a model’s outputs could mean losing developers to cheaper and more open alternatives.

Reliability Requires Control and Visibility

The reliability problems of current LLMs do not stem only from the models themselves but also from the tooling that providers give developers. For local open weight models it is usually possible to trade off complexity for reliability. The entire reasoning trace is always available and logprobs are fully transparent, allowing the developer to examine how an answer was arrived at. User and AI messages can be edited or generated at the developer’s discretion and constrained decoding could be used to produce text that follows any arbitrary format. For closed weight models this is becoming less and less the case. The decisions made around what features to restrict in APIs hurt developers and ultimately end users.

LLMs are increasingly being used in high-stakes situations such as medicine or law, and developers need tools to handle that risk responsibly. There are few technical barriers to providing more control and visibility to developers. Many of the highest-impact improvements, such as showing thinking output, allowing prefilling, or showing logprobs, cost almost nothing but would be a meaningful step towards making LLMs more controllable, consistent, and reliable.

There is a place for a clean and simple API, and there is some merit to concerns about distillation, but these shouldn’t be used as an excuse to take away important tools for diagnosing and fixing reliability problems. When models get used in high-stakes situations, as they increasingly are, failure to take reliability seriously is an AI safety concern.

Specifically, to take reliability seriously, model providers should improve their APIs by adding features that give developers more visibility into and control over model output. Reasoning should be provided in full at all times, with any safety violations handled the same way that they would have been handled in the final answer. Model providers should resume providing at least the top 20 logprobs over the entire output (reasoning included) so that developers have some visibility into how confident the model is in its answer. Constrained decoding should be extended beyond JSON and should support arbitrary output formats via something like regex or formal grammars.10 Developers should be granted full control over ‘assistant’ output – they should be able to prefill model answers, stop responses mid-generation, and branch them at will. Even if not all of these features make sense in the standard API, nothing is stopping model providers from building a new, more complex API; they have done it before. The decision to withhold these features is a policy choice, not a technical limitation.

Improving intelligence is not the only way to improve reliability and control, but it is usually the only lever that gets pulled.

Thank you to Ilan Strauss, Sean Goedecke, Tim O’Reilly and Mike Loukides for their helpful feedback on an earlier draft.

OpenAI has since moved on from the completions API but the new responses API also heavily enforces the separation of user and assistant messages.

Anthropic’s API supported prefill up until they launched their Claude 4.6 models; it is no longer supported for new models.

Interestingly, models have been shown to be able to tell when a response has been prefilled.

This technique is used in an efficient approximation of best of N called speculative rejection.

Forcing the model to generate in JSON may actually hurt performance.

OpenAI’s responses endpoint may have been created in part to hide the reasoning mode.

Distillation using top-k probabilities is possible but suboptimal.